Today, we’ll temporarily move away from assembly programming. It’s time to discuss a theme that I like a lot: articulatory speech synthesis. Simply put, speech synthesis comprises all the processes of production of synthetic speech signals. Currently, the most popular method for such task is the concatenative approach, which yields synthetic speech output by combining pre-recorded speech segments. Such segments, recorded from human speakers, are collected into a large database, or corpus which is segmented based on phonological features of a language, e.g., transitions from one phoneme to at least one other phoneme. A phoneme is the smallest posited structural unit that distinguishes meaning. It’s important to point out that phonemes are not the physical segments themselves, but, in theoretical terms, cognitive abstractions or categorizations of them. In turn, physical segments, referred to as phones, constitute the instances of phonemes in the actual utterances. For example, the words “madder” and “matter” obviously are composed of distinct phonemes; however, in american english, both words are pronounced almost identically, which means that their phones are the same, or at least very close in the acoustic domain.

On the other hand, articulatory synthesis produces a complete synthetic output, typically based on mathematical models of the structures (lips, teeth, tongue, glottis, and velum, for instance) and processes (transit of airflow along the supraglottal cavities, for instance) of speech. Technically, articulatory speech synthesis transforms a vector p(t) of anatomic or physiologic parameters into a speech signal Sv with predefined acoustic properties. For example, p(t) may include hyoid and tongue body position, protrusion and opening of lips, area of the velopharyngeal port, and so on. This way, an articulatory synthesizer ArtS maps the articulatory domain (from which p(t) is drawn) into the acoustic domain (where frequency properties of Sv lie). Computing the acoustic properties of Sv is the task of a special function. Now, using these definitions, the speech inverse problem is stated as an optimization problem, in which we try to find the best p(t) to minimize the acoustic distance between Sv and the output of ArtS.

The solution to the inverse problem is interesting for the following applications:

- Reduction of memory space and bandwidth requirements for storage and transmission of speech signals.

- Low cost and noninvasive comprehension and recollection of data on phonatory processes.

- Speech recognition, by means of transition to the articulatory domain, where signals may be characterized by fewer parameters.

- Retrieving the best parameters for synthesis of high-quality speech signals.

However, because mapping between articulatory and acoustic domains is nonlinear and many-to-one, definition and achievement of acceptable solutions to the inverse problem are not trivial issues. Globally, qualifying a candidate solution follows some type of relation on the acoustical domain. Furthermore, from the family of solutions to the problem, we are frequently interested only in those configurations consistent to descriptions of articulatory phonetics. Several groups have approached this problem. For example, Yehia and Itakura adopted an approach based on geometric representations of the articulatory space, including spatial constraints. Dusan and Deng used analytical methods to recover the vocal tract configurations. Sondhi and Schroeter relied on a codebook technique. Genetic algorithms have also been used, albeit the approach and type of signals studied differ to those used in this research. These later studies mainly investigate relations between articulation and perception on the basis of the tasks of the task dynamic description of inputs to a synthesizer. More recent research recur to control points experimentally measured to a group of speakers, and inversion minimizes the distance between the articulatory model and the referred points, by using quadratic approximations. On our side, we have previously investigated the application of computational intelligence techniques to the speech inverse problem. Concretely, fuzzy rules for modeling the tongue kinematics, neural networks to generate the glottal airflow and genetic algorithms to carry out the overall optimization process. Another novelty of our previous research was the use of the five spanish vowels as target phonemes for inversion.

Synthesis Models

In a broader level, ArtS integrates two models: the articulatory and the acoustic model. An articulatory model represents the essential components for speech production, and its main purpose is computation of the area function A(x, t), which reflects the variation in cross-sectional area of the acoustic tube whose boundaries are located at the glottis and the mouth, respectively. Here, transitions between phonemes are not researched, and thereby the time variable will be dropped from the area function and from the vector p. On its side, an acoustic model specify the transformations between A(x) and the acoustic domain. Naturally, such mapping also requires information about the energy source exciting the tract. According to the acoustic theory of speech production, the target phonemes are considered as the output of a filter characterized by A(x) and excited by a periodic glottal signal.

In this post, we’ll restrict our presentation to the Articulatory Model:

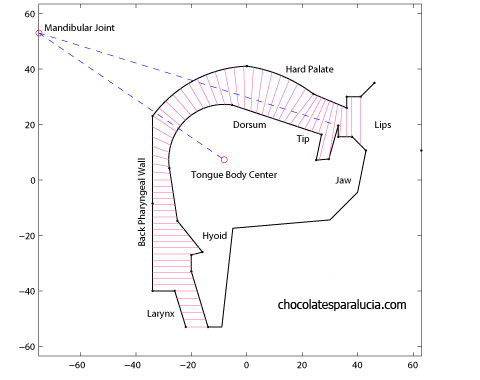

Phonemes of interest can be characterized on the articulatory midsagittal plane. Models of this type consolidated with Mermelstein’s paper, whose metrics and observations have been reutilized by several researchers, mainly for its explicitness and complete explanations. Midsagittal models describe position and movement of articulators on the plane. As the parameters of this model have an anatomical meaning, they simplify visualization of articulatory configurations, and contribute with the inversion techniques in rejecting some groups of unnatural configurations. Equilibrium position of our model, depicted in the above figure, is partially based on Mermelstein’s measurements. As the result of muscular contractions linked to articulation, the model may leave its equilibrium configuration, and ideally take on some articulatory configuration correspondent to a specific phoneme; therein lies the goal of inversion. In this respect, our articulatory vector p will group several supraglottal muscles whose activity alters the state of the midsagittal outline. In numerical terms, there are no complete and definitive data on this muscular activity and its kinematic effects. We may consider the muscular activity as a real number bordered by 0.0 and 1.0, denoting null and maximum muscular activity, respectively. General effects of muscular contractions are taken from approximations in the literature. Certainly, in order to cover the articulatory space, I like to store the following 12 muscles into p:

- Middle Pharynx Constrictor: This muscle influences horizontal displacement of the hyoid bone, and its surrounding zones, approximately by 0.5 cm.

- Masseter(MA): Raises the jaw, following an angle up to 0.15 radians relative to temporomandibular joint.

- Mylohyoid (MH): Descends the mandible, up to 0.20 radians respect to temporomandibular joint.

- Styloglossus (SG): Retracts the tongue in direction of the styloid process.

- Hyoglossus (HG): Descends and slightly retracts the tongue body.

- Anterior Genioglossus (GGa): Descends the tongue tip.

- Middle Genioglossus (GGm): Moves tongue body forward, and slightly downward.

- Posterior Genioglossus (GGp): Raises and advances the tongue body.

- Intrinsic tongue muscles (Up): Raise tongue tip.

- Intrinsic tongue muscles (Down): Descend tongue tip.

- Risorius: Spreads the lips, changing the width of the lip opening up to 4.5 cm.

- Orbicularis oris: Acts for lip rounding, pulling the upper and lower lips together (with a respective maximum vertical change of 1 cm) and protruding them (up to 2.0 cm).

In upcoming posts, we’ll continue our review of articulatory speech synthesis. And I have yet to provide the references.

Update (2010-12-10): You may be interested in Resources for Articulatory Speech Synthesis and Hints at Speech Inverse Filtering of Fricative Phonemes.

— why is this approach more useful ?

Carlos:

1. Reduction of memory space and bandwidth requirements for storage and transmission of speech signals.

2. Low cost and noninvasive comprehension and recollection of data on phonatory processes.

3. Speech recognition, by means of transition to the articulatory domain, where signals may be characterized by fewer parameters.

4. Retrieving the best parameters for synthesis of high-quality speech signals.

Is there any articulatory model beside Mermelstein model?

And, why the most popular articulatory model is Mermelstein model?

Thanks.

Hi, arie.

Mermelstein’s model is very popular because it metrics are simple yet effective. I used such model in my doctoral research, but some widely used systems use it too:

* Paul Boersma’s PRAAT includes a synthesizer based on Mermelstein’s model.

* D. G. Childers synthesis toolbox also uses it.

There are other articulatory models besides Mermelstein’s. Currently, there are some very interesting 3D models.

Your model is very intersting, now you should move on to 3d models. What about equations in such case?